Computex 2021: GIGABYTE Server Updates MZ72-HB0 For Dual Socket 3rd Gen EPYC

by Gavin Bonshor on June 4, 2021 9:00 AM EST

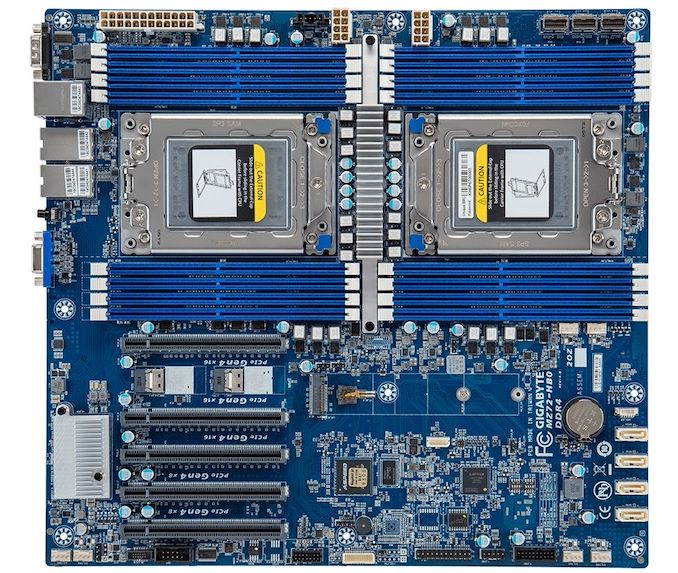

During Computex 2021 in Taipei, which is an all-digital event this year due to the coronavirus pandemic, GIGABYTE Server has showcased its newly revised MZ72-HB0 dual-socket motherboard with support for AMD's 3rd generation EPYC 7003 processors. The GIGABYTE Server MZ72-HB0 boasts support for up to 128 cores and 256 threads (64c/128t per socket), dual 10 GbE Base-T Ethernet, up to 4 TB of DDR4-3200 memory, and five full-length PCIe 4.0 slots.

In the server space, the use cases for high-core-count processors include data centers, cloud computing, and MPI parallel programming. This is where the GIGABYTE Server MZ72-HB0 comes in, with support for chips with a TDP of up to 280 W. This means it can support a maximum of 128 cores and 256 threads of powerful Zen 3 EPYC 7003 goodness across both AMD SP3 sockets. Each of the SP3 sockets has eight memory slots (sixteen in total), with support for up to 2 TB of DDR4-3200 memory per processor operating in eight-channel mode, with RDIMM, LRDIMM, and 3DS memory types all supported.
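To make the headline numbers easier to check, here is a minimal Python sketch of the arithmetic behind them. The per-socket figures come from the specifications quoted above. The implied DIMM size is our own derivation and assumes every slot is populated with identical modules; the constant and function names are purely illustrative.

```python
# Per-socket figures as quoted in the article.
SOCKETS = 2
CORES_PER_SOCKET = 64
THREADS_PER_CORE = 2
DIMM_SLOTS_PER_SOCKET = 8
MAX_MEMORY_PER_SOCKET_TB = 2


def platform_totals():
    """Return board-level totals derived from the per-socket figures."""
    total_cores = SOCKETS * CORES_PER_SOCKET
    total_threads = total_cores * THREADS_PER_CORE
    total_slots = SOCKETS * DIMM_SLOTS_PER_SOCKET
    total_memory_tb = SOCKETS * MAX_MEMORY_PER_SOCKET_TB
    # Assumption: all slots hold equal-sized modules (1 TB taken as 1024 GB).
    dimm_size_gb = MAX_MEMORY_PER_SOCKET_TB * 1024 // DIMM_SLOTS_PER_SOCKET
    return {
        "cores": total_cores,
        "threads": total_threads,
        "dimm_slots": total_slots,
        "memory_tb": total_memory_tb,
        "implied_dimm_gb": dimm_size_gb,
    }


if __name__ == "__main__":
    for name, value in platform_totals().items():
        print(f"{name}: {value}")
```

In other words, reaching the 4 TB maximum would likely require 256 GB modules in all sixteen slots, which puts that ceiling well beyond typical configurations.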

The GIGABYTE Server MZ72-HB0 Revision 3.0 boasts a wide variety of features, including plenty of storage options, with one PCIe 4.0 x4 M.2 slot, four 7-pin SATA ports, and three SlimSAS ports offering support for either twelve SATA or three PCIe 4.0 U.2 NVMe drives. Included on the board is an ASPEED AST2500 BMC, which allows access to GIGABYTE's Management Console (GMC) and is paired with a Gigabit management LAN port for remote access. Other networking capability includes a pair of 10 GbE Base-T LAN ports powered by a Broadcom BCM57416 controller. On the lower portion of the board are five full-length PCIe 4.0 slots that can operate at x16/x16/x16/x8/x8, while I/O on the rear panel includes two USB 3.0 ports, a COM port, and a D-sub video output for the BMC.
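For a rough sense of what the x16/x16/x16/x8/x8 slot layout means in practice, the sketch below estimates the theoretical one-direction bandwidth of each slot. It uses the standard PCIe 4.0 figures of 16 GT/s per lane with 128b/130b encoding. These are link-level ceilings rather than measured throughput, and the function names are our own.

```python
# Slot widths as listed in the article, in lanes.
SLOT_WIDTHS = [16, 16, 16, 8, 8]

# PCIe 4.0 link parameters: 16 GT/s per lane, 128b/130b line encoding.
PCIE4_GT_PER_S = 16.0
ENCODING_EFFICIENCY = 128 / 130


def lane_bandwidth_gb_s():
    """Theoretical one-direction bandwidth of a single PCIe 4.0 lane in GB/s."""
    return PCIE4_GT_PER_S * ENCODING_EFFICIENCY / 8


def slot_bandwidth_gb_s(width):
    """Theoretical one-direction bandwidth of a slot with `width` lanes."""
    return width * lane_bandwidth_gb_s()


if __name__ == "__main__":
    print(f"Total slot lanes: {sum(SLOT_WIDTHS)}")
    for index, width in enumerate(SLOT_WIDTHS, start=1):
        print(f"Slot {index}: x{width}, {slot_bandwidth_gb_s(width):.1f} GB/s")
```

The five slots add up to 64 lanes, which leaves the remainder of the platform's PCIe lanes available for the M.2 slot, the SlimSAS ports, and onboard controllers.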

At the time of writing, we are unsure when the GIGABYTE Server MZ72-HB0 will be available at retail, although the company has started channel distribution and we already have a review unit in-house on our Milan test bench. The previous MZ72-HB0 (revision 1.0) model with support for EPYC 7002 processors retails for between $700 and $900 depending on the retailer, so we expect the newer revision 3.0 model to fall in a similar price bracket.

Source: GIGABYTE Server

12 Comments

Chaitanya - Friday, June 4, 2021

What connectors are sitting between 1st 2 PCI-E slots? Very odd locations if the cables are bulky.

MenhirMike - Friday, June 4, 2021

Looks like OCuLink.

MirrorMax - Saturday, June 5, 2021

Slimline sas sff 8654 4i

There's a total of 5 of these connectors those between PCI ports are angled so they don't interfer with pcie cards

MirrorMax - Saturday, June 5, 2021

Why wasn't Milan support just a bios update to the rev1 board like most other Rome motherboards, i don't see anything new otherwise.

SanX - Saturday, June 5, 2021

Which mobo anyone recommend to make ~1000 core AMD EPIC-based supercomputer for floating point 3D simulations like particle-in-cell or molecular dynamics ?

Rudde - Saturday, June 5, 2021

I'm curious, is there any reason why EPYC is better than professional GPUs? (Or AVX512)

spdcrzy - Sunday, June 6, 2021

I'd go with full servers instead at that point. Gigabyte's 10xGPU 4U server is the obvious choice for supercomputer-level performance unless you choose to go directly with NVidia's DGX A100 - but that's a 6U package instead of the traditional 4U server. The Gigabyte solution has more expansion, though. It supports 128 cores and 10 GPUs along with 8x U.2 NVMe and 4x SATA/SAS drives as well as an OCP 3.0 slot for up to 2x200Gb networking. That gives you 1024 cores, 80 A100 GPUs, a total of up to 32TB of RAM using 128GB DIMMs, 100+ TB of raw U.2 NVMe scratch capacity (at potentially up to 25+ GB/s, which is knocking on the door of DDR4), another 60+ GB of SAS capacity, 400Gb/s interconnect, and a 5 year warranty, all for the cool, cool price of $1.6M and a peak power consumption of over 30kW. 8 DGX A100s would cost about the same, but have less scratch space and take up more room, as well as consume up to 70% more power. But keep in mind that a DGX is a completely self-contained unit, whereas a normal server like the Gigabyte would require additional (and very power hungry) network switches and other management hardware and software. If you're dealing with a dataset that's being accessed remotely, and all you want is plug and play, then the DGX is the winner. But if for whatever reason you need to keep the data in the machine itself, or if the dataset is truly enormous and requires multiple DWPD, then you might be better off with a more classic server like the Gigabyte, which is also expandable and upgradeable over time as better technology and updates are released.

SanX - Thursday, June 10, 2021

GPUs do not suit our purposes. Tests show poor performance

SanX - Thursday, June 10, 2021

Same poor performance is with IBM processors

SADRULESXD - Monday, July 5, 2021

will this be able to use 3090s? and any ram that uses 3200?