Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST - Posted in

- Cloud Computing

- IT Computing

- Intel

- Xeon

- Ivy Bridge EP

- Supermicro

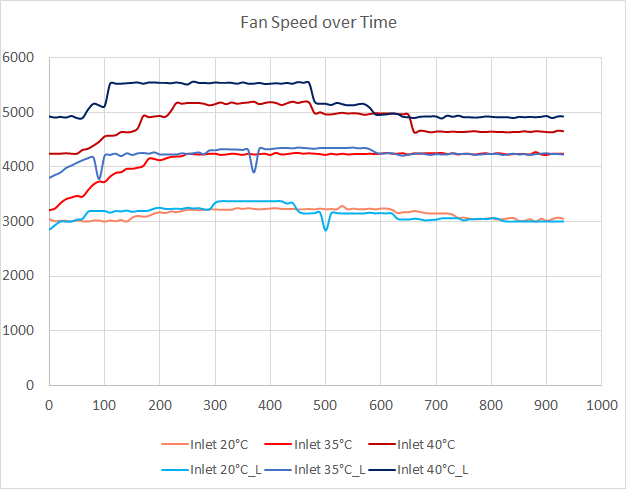

Fan Speed

How do these higher temperatures affect the fans?

It is clear that the fan speed algorithm takes more than just the CPU temperature and inlet temperature into account: it evidently detects when a low-power CPU with a low Tcase is installed. As a result, the fans spin faster with the Xeon E5-2650L than with the Xeon E5-2697 v2. That also means the server has more cooling headroom for the Xeon E5-2697 v2 than we first assumed from the CPU temperature results. At higher inlet temperatures, the fans can still spin up further if necessary with the 2697 v2, since the maximum fan speed is 7000 RPM.
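Supermicro does not document its fan algorithm, so the following is only a hypothetical model that reproduces the behaviour described above: fan speed driven by the CPU's remaining headroom to its Tcase limit as well as by the inlet temperature. The function `fan_rpm`, the `MIN_RPM` floor, the 40-degree headroom window, the 20-45°C inlet range and the 70°C/90°C Tcase limits in the usage lines are all our assumptions; only the 7000 RPM maximum comes from the article.

```python
# Hypothetical fan controller sketch: NOT Supermicro's firmware logic.
# It only illustrates why a CPU with a lower Tcase limit can get faster
# fans than a hotter-running CPU with a higher limit.

MAX_RPM = 7000  # maximum fan speed reported in the article
MIN_RPM = 2000  # hypothetical idle floor (assumption)


def fan_rpm(cpu_temp_c: float, tcase_limit_c: float, inlet_temp_c: float) -> int:
    """Return a fan speed from CPU temperature, CPU Tcase limit and inlet temperature.

    All thresholds below are hypothetical.
    """
    # Thermal headroom: degrees left before the CPU reaches its limit.
    headroom = tcase_limit_c - cpu_temp_c
    # CPU demand: 0.0 with 40 or more degrees of headroom, 1.0 with none left.
    cpu_demand = min(max(1.0 - headroom / 40.0, 0.0), 1.0)
    # Inlet demand: 0.0 at 20°C, 1.0 at 45°C or above.
    inlet_demand = min(max((inlet_temp_c - 20.0) / 25.0, 0.0), 1.0)
    # Follow whichever input asks for more airflow, then map to the RPM range.
    demand = max(cpu_demand, inlet_demand)
    return round(MIN_RPM + demand * (MAX_RPM - MIN_RPM))


if __name__ == "__main__":
    # Same CPU temperature and inlet, different (hypothetical) Tcase limits.
    print(fan_rpm(60, 70, 35))  # low-Tcase part  -> 5750
    print(fan_rpm(60, 90, 35))  # high-Tcase part -> 5000
```

In this toy model the low-Tcase part ends up with faster fans at the same temperatures, while the higher-Tcase part still has room to spin up before hitting the 7000 RPM ceiling.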

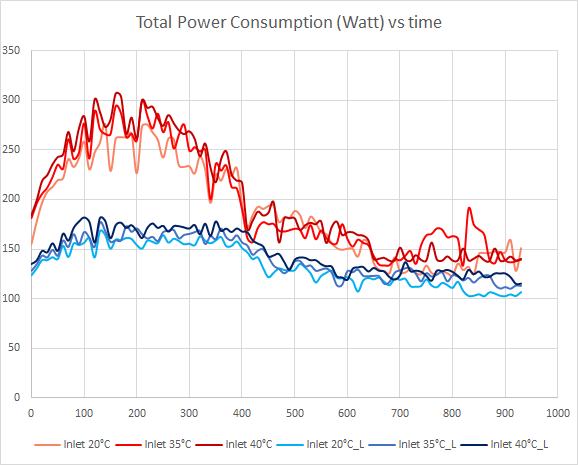

Power Measurements

The big question, of course, is how all this affects the power bill. It's no use saving on cooling if your server simply consumes a lot more power because of higher fan speeds (and potentially suffers more downtime, as fans need replacing more often).

The differences in power consumption between the three inlet temperatures are small. To make them easier to compare, we normalized all measurements to the 20°C inlet result (100%) for each CPU and load range, giving the following table:

| CPU load (%) | Xeon E5-2697 v2, 20°C inlet | Xeon E5-2697 v2, 35°C inlet | Xeon E5-2697 v2, 40°C inlet | Xeon E5-2650L, 20°C inlet | Xeon E5-2650L, 35°C inlet | Xeon E5-2650L, 40°C inlet |
|---|---|---|---|---|---|---|
| 0-10 | 100% | 105% | 106% | 100% | 106% | 112% |
| 10-20 | 100% | 98% | 103% | 100% | 104% | 108% |
| 20-30 | 100% | 105% | 110% | 100% | 103% | 107% |
| 30-40 | 100% | 102% | 105% | 100% | 102% | 105% |
| 40-50 | 100% | 109% | 108% | 100% | 97% | 109% |
| 50-60 | 100% | 105% | 108% | 100% | 108% | 111% |
| 60-70 | 100% | 106% | 107% | 100% | 104% | 110% |
| 70-80 | 100% | 106% | 104% | 100% | 105% | 109% |
| 80-90 | N/A | N/A | N/A | 100% | 109% | 108% |
| Average | 100% | 105% | 107% | 100% | 103% | 109% |
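The Average row can be roughly reproduced from the per-bin figures. Below is a minimal Python sketch that averages the rounded percentages from the table, skipping the N/A bin of the E5-2697 v2; the names `TABLE` and `average_increase` are ours, for illustration only. With rounded inputs it yields 104.5%, 106.4%, 104.2% and 108.8%, within about one point of the Average row; the article does not say exactly how its averages were computed, so small differences are to be expected.

```python
from statistics import mean

# Relative server power per CPU-load bin (0-10% ... 80-90%), normalized to the
# 20°C inlet result and copied from the table (rounded to whole percent).
# None marks the N/A 80-90% bin of the Xeon E5-2697 v2.
TABLE = {
    "Xeon E5-2697 v2": {
        35: [105, 98, 105, 102, 109, 105, 106, 106, None],
        40: [106, 103, 110, 105, 108, 108, 107, 104, None],
    },
    "Xeon E5-2650L": {
        35: [106, 104, 103, 102, 97, 108, 104, 105, 109],
        40: [112, 108, 107, 105, 109, 111, 110, 109, 108],
    },
}


def average_increase(values):
    """Mean relative power (in percent) over the load bins that have data."""
    return mean(v for v in values if v is not None)


if __name__ == "__main__":
    for cpu, by_inlet in TABLE.items():
        for inlet, values in by_inlet.items():
            print(f"{cpu} @ {inlet}°C inlet: {average_increase(values):.1f}%")
```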

Because the fans have to work considerably harder to keep the Xeon E5-2650L below its low Tcase, the 2650L system's power draw rises more: on average 9% when the inlet temperature goes from 20°C to 40°C. The increase is smaller with the Xeon E5-2697 v2, at only 7%.

The most interesting conclusion is that raising the inlet temperature from 20°C to 35°C increases power consumption on the server side only slightly (3-5%), while the savings on cooling and ventilation can be substantial: around 40% or more.
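To see why a few percent more server power can still be a clear net win, here is a back-of-the-envelope Python sketch. The 100 kW server load and 40 kW cooling load are hypothetical round numbers, not measurements from this article; the 5% server increase and 40% cooling saving are the figures quoted above. The model also ignores that cooling demand depends partly on the extra heat the servers give off.

```python
# Back-of-the-envelope estimate. The IT/cooling split is HYPOTHETICAL,
# chosen only for illustration; it is not measured data.
IT_POWER_KW = 100.0      # server power at 20°C inlet (assumption)
COOLING_POWER_KW = 40.0  # cooling + ventilation power at 20°C inlet (assumption)


def facility_power(it_kw: float, cooling_kw: float,
                   server_increase: float, cooling_saving: float) -> float:
    """Total power after raising the inlet temperature.

    server_increase: fractional rise in server power (0.05 = +5%)
    cooling_saving:  fractional cut in cooling power (0.40 = -40%)
    """
    return it_kw * (1 + server_increase) + cooling_kw * (1 - cooling_saving)


before = IT_POWER_KW + COOLING_POWER_KW
after = facility_power(IT_POWER_KW, COOLING_POWER_KW, 0.05, 0.40)
print(f"before: {before:.1f} kW, after: {after:.1f} kW, "
      f"change: {100 * (after / before - 1):.1f}%")
```

Under these assumptions the total drops from 140 kW to 129 kW, roughly 8%, even with the worst-case 5% rise in server power.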

48 Comments


lwatcdr - Thursday, February 20, 2014

Here in South Florida it would probably be cheaper. The water table is very high and many wells are only 35 feet deep.

rrinker - Tuesday, February 11, 2014

It's been done already. I know I've seen it in an article on new data centers in one industry publication or another.

A museum near me recently drilled dozens of wells under their parking lot for geothermal cooling of the building. Being large with lots of glass area, it got unbearably hot during the summer months. Now, while it isn't as cool as you might set your home air conditioning, it is quite comfortable even on the hottest days, and the only energy used is for the water pumps and fans. Plus it's better for the exhibits, reducing the yearly variation in temperature and humidity. Definitely a feasible approach for a data center.

noeldillabough - Tuesday, February 11, 2014

I was actually talking about this today; the big cost for our data centers is air conditioning. What if we had a building up north (in the Arctic) where the ground is always frozen, even in summer? Geothermal cooling for free, by pumping water through your "radiator".

Not sure what environmental impact this would have, but the emptiness that is the Arctic might like a few data centers!

superflex - Wednesday, February 12, 2014

The enviroweenies would scream about you defrosting the permafrost.

Some slug or bacteria might become endangered.

evonitzer - Sunday, February 23, 2014

Unfortunately, the cold areas are also devoid of people and therefore of internet connections. You'll have to figure in the cost of running fiber to your remote location, as well as how the distance might affect latency. If you go into permafrost areas, there are additional complications, as constructing on permafrost is a challenge. A datacenter high in the mountains but close to population centers would seem a good compromise.

fluxtatic - Wednesday, February 12, 2014

I proposed this at work, but management stopped listening somewhere between me saying we'd need to put a trench through the warehouse floor to outside the building, and that I'd need a large, deep hole dug right next to the building, where I would bury several hundred feet of copper pipe.

I also considered using the river that's 20' from the office, but I'm not sure the city would like me pumping warm water into their river.

Varno - Tuesday, February 11, 2014

You seem to be reporting on the junction temperature, which is reported by most measurement programs, rather than the cast temperature that is impossible to measure directly without interfering with the results. How have you accounted for this in your testing?

JohanAnandtech - Tuesday, February 11, 2014

Do you mean case temperature? We did measure the outlet temperature, but it was significantly lower than the junction temperature. For the Xeon 2697 v2, it was 39-40°C at 35°C inlet, and 45°C at 40°C inlet.

Kristian Vättö - Tuesday, February 11, 2014

Google's use of raw seawater for cooling its data center in Hamina, Finland is pretty cool IMO. Given that the specific heat capacity of water is much higher than air's, it is more efficient for cooling, especially in our climate where seawater is always relatively cold.

JohanAnandtech - Tuesday, February 11, 2014

I admit, I somewhat ignored the Scandinavian datacenters as "free cooling" is a bit obvious there. :-)

I thought some readers would be surprised to find out that even in sunny California free cooling is available most of the year.