Intel's Xeon E5-2600 V2: 12-core Ivy Bridge EP for Servers

by Johan De Gelas on September 17, 2013 12:00 AM EST

Benchmark Configuration & Method

This review is mostly focused on performance. We have included the Xeon E5-2697 v2 (12 cores at 2.7-3.5GHz) and Xeon E5-2650L v2 (10 cores at 1.7-2.1GHz) to characterize the performance of the new high-end and lower-midrange Xeons. That way, you can get an idea of where the rest of the 12-core and 10-core Xeon SKUs will land. We also have the previous generation E5-2690 and E5-2660, so we can see the improvements from the new architecture. This also allows us to gauge how competitive the Opteron "Piledriver" 6300 is.

Intel's Xeon E5 server R2208GZ4GSSPP (2U Chassis)

| Component | Details |
|---|---|
| CPU | Two Intel Xeon processor E5-2697 v2 (2.7GHz, 12c, 30MB L3, 130W) |
| | Two Intel Xeon processor E5-2690 (2.9GHz, 8c, 20MB L3, 135W) |
| | Two Intel Xeon processor E5-2660 (2.2GHz, 8c, 20MB L3, 95W) |
| | Two Intel Xeon processor E5-2650L v2 (1.7GHz, 10c, 25MB L3, W) |
| RAM | 64GB (8x8GB) DDR3-1600 Samsung M393B1K70DH0-CK0 or 128GB (8 x 16GB) Micron MT36JSF2G72PZ – BDDR3-1866 |
| Internal Disks | 2 x Intel MLC SSD710 200GB |
| Motherboard | Intel Server Board S2600GZ "Grizzly Pass" |
| Chipset | Intel C600 |
| BIOS version | SE5C600.86B (August 6, 2013) |
| PSU | Intel 750W DPS-750XB A (80+ Platinum) |

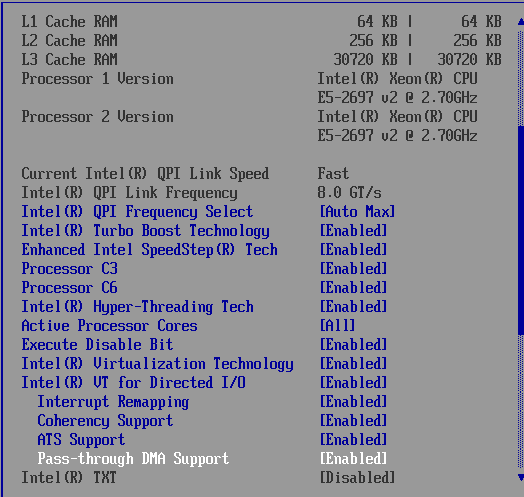

The Xeon E5 CPUs have four memory channels per CPU and support up to DDR3-1866, and thus our dual CPU configuration gets eight DIMMs for maximum bandwidth. The typical BIOS settings can be found below.
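To put the "eight DIMMs for maximum bandwidth" remark in numbers, here is a rough sketch of the theoretical peak memory bandwidth for this kind of configuration. It assumes one DIMM per channel, a 64-bit (8-byte) data bus per DDR3 channel, and the nominal transfer rates of 1600 and 1866 MT/s; the function name is ours, and sustained bandwidth in real benchmarks will be noticeably lower than these ceilings.

```python
def peak_bandwidth_gbs(mt_per_s, channels, sockets=1, bus_bytes=8):
    """Theoretical peak memory bandwidth in GB/s (10^9 bytes per second).

    mt_per_s  : nominal transfer rate in megatransfers per second (e.g. 1866)
    channels  : populated memory channels per socket
    sockets   : number of CPU sockets
    bus_bytes : bytes moved per transfer per channel (assumed 8 for DDR3)
    """
    return mt_per_s * 1e6 * bus_bytes * channels * sockets / 1e9


if __name__ == "__main__":
    # Four channels per socket, two sockets, as in the Xeon E5 test system.
    for speed in (1600, 1866):
        per_socket = peak_bandwidth_gbs(speed, channels=4)
        total = peak_bandwidth_gbs(speed, channels=4, sockets=2)
        print(f"DDR3-{speed}: {per_socket:.1f} GB/s per socket, "
              f"{total:.1f} GB/s for both sockets")
```

Leaving a channel unpopulated removes a quarter of the per-socket ceiling, which is why all four channels on each CPU are filled.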

Supermicro A+ Opteron server 1022G-URG (1U Chassis)

| Component | Details |
|---|---|
| CPU | Two AMD Opteron "Abu Dhabi" 6380 at 2.5GHz |
| | Two AMD Opteron "Abu Dhabi" 6376 at 2.2GHz |
| RAM | 64GB (8x8GB) DDR3-1600 Samsung M393B1K70DH0-CK0 |
| Motherboard | SuperMicro H8DGU-F |
| Internal Disks | 2 x Intel MLC SSD710 200GB |
| Chipset | AMD Chipset SR5670 + SP5100 |
| BIOS version | v2.81 (10/28/2012) |
| PSU | SuperMicro PWS-704P-1R 750Watt |

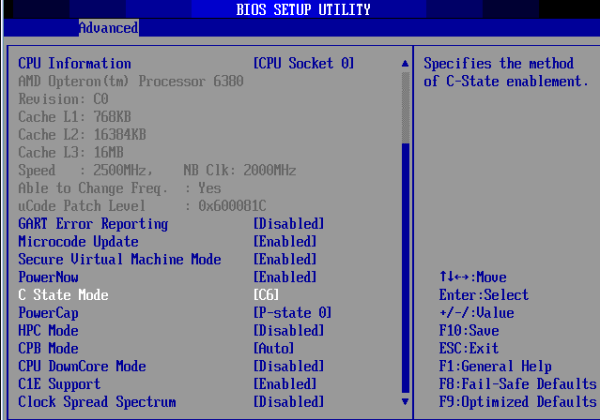

The same is true for the latest AMD Opterons: eight DDR3-1600 DIMMs for maximum bandwidth. You can check out the BIOS settings of our Opteron server below.

C6 is enabled, TurboCore (CPB mode) is on.

Common Storage System

To minimize the number of variables between our tests, we use our common storage system to provide LUNs via iSCSI. The applications are placed on a RAID-50 LUN of ten Cheetah 15k5 disks inside a Promise JBOD J300, connected to an Adaptec 5058 PCIe controller. For the more demanding applications (Zimbra, phpBB), storage is provided by a RAID-0 of Micron P300 SSDs, with a 6Gbps Adaptec 72405 PCIe RAID controller.

Software Configuration

All vApus testing is done on vSphere 5, specifically VMware ESXi 5.1. All VMDKs are thick provisioned and use independent, persistent mode. The power policy is "Balanced Power" unless otherwise indicated. All other testing is done on Windows 2008 Enterprise R2 SP1. Unless noted otherwise, we use the "High Performance" setting on Windows 2008 R2 SP1.

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs. We use the Racktivity ES1008 Energy Switch PDU to measure power consumption. Using a PDU for accurate power measurements might seem pretty insane, but this is not your average PDU. The measurement circuits of most PDUs assume that the incoming AC is a perfect sine wave, but it never is. The Racktivity PDU, however, measures true RMS current and voltage at a very high sample rate: up to 20,000 measurements per second for the complete PDU.
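The difference between a true RMS measurement and one that assumes a perfect sine wave can be illustrated with a small sketch. This is not how the Racktivity PDU is implemented; the waveform below (a sine plus a 30% third harmonic) and all function names are made up for illustration. The "sine-assuming" estimator takes the rectified average and scales it by the form factor of a pure sine, π/(2√2) ≈ 1.11, which is only correct when the signal really is a sine.

```python
import math


def true_rms(samples):
    """Root of the mean of the squared samples: valid for any waveform."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def sine_assuming_rms(samples):
    """Rectified mean scaled by the form factor of a pure sine wave."""
    mean_abs = sum(abs(s) for s in samples) / len(samples)
    return mean_abs * math.pi / (2 * math.sqrt(2))


def sample_one_period(waveform, n=2000):
    """Sample a function of phase angle at n evenly spaced points in one period."""
    return [waveform(2 * math.pi * k / n) for k in range(n)]


def pure_sine(theta):
    return math.sin(theta)


def distorted(theta):
    # Hypothetical distorted waveform: fundamental plus a 30% third harmonic.
    return math.sin(theta) + 0.3 * math.sin(3 * theta)


if __name__ == "__main__":
    for name, fn in (("pure sine", pure_sine), ("distorted", distorted)):
        samples = sample_one_period(fn)
        print(f"{name}: true RMS = {true_rms(samples):.4f}, "
              f"sine-assuming estimate = {sine_assuming_rms(samples):.4f}")
```

For the pure sine the two estimators agree; for the distorted waveform the sine-assuming estimate is off by several percent, which is the kind of error a true RMS meter avoids.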

70 Comments

mczak - Tuesday, September 17, 2013

Yes that's surprising indeed. I wonder how large the difference in die size is (though the reason for two dies might have more to do with power draw).

zepi - Tuesday, September 17, 2013

How about adding turbo frequencies to the SKU comparison tables? That'd make comparing the SKUs a bit easier, as that is sometimes a more representative figure depending on the load these babies are run under.

JarredWalton - Tuesday, September 17, 2013

I added Turbo speeds to all SKUs as well as linking the product names to the various detail pages at AMD/Intel. Hope that helps! (And there were a few clock speed errors before that are now corrected.)

zepi - Wednesday, September 18, 2013

Appreciated!

zepi - Wednesday, September 18, 2013

For most server buyers things are not this simple, but for armchair sysadmins this might do: http://cornflake.softcon.fi/export/ivyexeon.png

ShieTar - Tuesday, September 17, 2013

"Once we run up to 48 threads, the new Xeon can outperform its predecessor by a wide margin of ~35%. It is interesting to compare this with the Core i7-4960X results, which is the same die as the "budget" Xeon E5s (15MB L3 cache dies). The six-core chip at 3.6GHz scores 12.08."

What I find most interesting here is that the Xeon manages to show a factor of 23 between multi-threaded and single-threaded performance, a very good scaling for a 24-thread CPU. The 4960X only manages a factor of 7 with its 12 threads. So it is not merely a question of "cores over clock speed", but rather hyperthreading seems to not work very well on the consumer CPUs in the case of Cinebench. The same seems to be true for the Sandy Bridge and Haswell models as well.

Do you know why this is? Is hyperthreading implemented differently for the Xeons? Or is it caused by the different OS used (Windows 2008 vs Windows 7/8)?

JlHADJOE - Tuesday, September 17, 2013

^ That's very interesting. Made me look over the Xeon results and yes, they do appear to be getting close to a 100% increase in performance for each thread added.

psyq321 - Tuesday, September 17, 2013

Hyperthreading is the same.

However, HCC version of IvyTown has two separate memory controllers, more features enabled (direct cache access, different prefetchers etc.). So it might scale better.

I am achieving 1.41x speed-up with dual Xeon 2697 v2 setup, compared to my old dual Xeon 2687W setup. This is so close to the "ideal" 1.5x scaling that it is pretty amazing. And, 2687w was running on a slightly higher clock in all-core turbo.

So, I must say I am very happy with the IvyTown upgrade.

garadante - Tuesday, September 17, 2013

It's not 24 threads, it's 48 threads for that scaling. 2x physical CPUs with 12 cores each, for 24 physical cores and a total of 48 logical cores.

Kevin G - Tuesday, September 17, 2013

Actually if you run the numbers, the scaling factor from 1 to 48 threads is actually 21.9. I'm curious what the result would have been with Hyperthreading disabled as that can actually decrease performance in some instances.